MLOps combines Machine Learning with DevOps principles to integrate SAP Business AI into SAP Cloud ERP processes on SAP BTP, Microsoft Azure or Amazon Web Services (AWS) cloud platforms.

Advanced SAP Business AI solutions have to be integrated into SAP Cloud ERP processes with end-to-end ML DevOps lifecycle management.

Machine Learning DevOps (MLOps) integrates Machine Learning, implemented with Cloud Business AI services, with DevOps CI/CD pipelines and operations end-to-end, to streamline delivery processes for SAP Business AI solutions in cloud environments. MLOps on Kubernetes clusters enables implementations with resource-intensive training pipelines and scalable productive deployments for inferencing.

Kubernetes clusters are scalable environments available as managed services on hyperscaler platforms, for example Azure Kubernetes Service (AKS) or Amazon Elastic Kubernetes Service (EKS). These containerized environments offer features like internal DNS service discovery, load balancing within clusters, automated rollouts and rollbacks, self-healing of failing containers and configuration management.

SAP AI Core on the SAP Business Technology Platform (SAP BTP) is a service to implement SAP AI scenarios for business use-cases with MLOps lifecycle management for training and serving workloads on managed Kubernetes clusters.

Multi-tenancy concepts, with SAP AI Core as tenant-aware BTP reuse service, are realized on different levels. SAP AI Core resource groups separate collections of resources mapped to Kubernetes namespaces for tenant specific workloads. Multi-cloud object stores like AWS S3 or Azure Storage store artifacts like referenced datasets or trained machine learning models.

SAP AI Core MLOps training steps are defined with Argo Workflow templates and orchestrated with Kubernetes container native workflow engines. For this, Argo Workflow controllers manage pods with three containers for each workflow step or Directed Acyclic Graph (DAG) task.
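As a rough illustration of what a DAG-based Argo Workflow template looks like, the following sketch builds a generic `WorkflowTemplate` manifest as a Python dictionary and prints it as JSON. The template name, container image, `pipeline.py` script and step names are hypothetical, and the SAP AI Core specific annotations and labels a real executable needs are intentionally left out.

```python
import json


def build_workflow_template(name, image):
    """Return a generic Argo WorkflowTemplate manifest with a three-task DAG."""

    def task(task_name, depends_on=None):
        # Every DAG task reuses the same container template with a different step name.
        entry = {
            "name": task_name,
            "template": "run-step",
            "arguments": {"parameters": [{"name": "step", "value": task_name}]},
        }
        if depends_on:
            entry["dependencies"] = depends_on
        return entry

    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "WorkflowTemplate",
        "metadata": {"name": name},
        "spec": {
            "entrypoint": "training-dag",
            "templates": [
                {
                    "name": "training-dag",
                    "dag": {
                        "tasks": [
                            task("preprocess"),
                            task("train", ["preprocess"]),
                            task("evaluate", ["train"]),
                        ]
                    },
                },
                {
                    "name": "run-step",
                    "inputs": {"parameters": [{"name": "step"}]},
                    "container": {
                        # Hypothetical image and entry script, for illustration only.
                        "image": image,
                        "command": ["python", "pipeline.py"],
                        "args": ["--step", "{{inputs.parameters.step}}"],
                    },
                },
            ],
        },
    }


manifest = build_workflow_template("demo-training", "example.registry/demo-trainer:0.1")
print(json.dumps(manifest, indent=2))
```

Each DAG task is executed in its own pod, and the `dependencies` entries define the order in which the workflow controller schedules them.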

SAP AI Core implements Argo Workflow templates as executables of training pipelines and KServe as inference platform. Both Kubernetes frameworks extend the Kubernetes API with Custom Resource Definitions (CRDs) to control training and serving workloads. SAP AI Core applications use ArgoCD to automatically synchronize Argo Workflow and KServe templates, stored in Git repositories, with Kubernetes.

SAP Business AI training workflow processes are integrated into CI/CD pipelines, with Argo Workflow templates created manually or generated from Metaflow pipelines. Argo Workflow behavior can be extended, with the SAP AI Core Metaflow Python plugin, by adding decorators for local deployments or event processing.

The SAP AI Launchpad UI centralizes the MLOps lifecycle management and operations of Business AI scenarios. Some capabilities of the SAP AI Launchpad are monitoring of metrics of foundation models and the uniform integration of LLMs into SAP Business AI applications with the Generative AI Hub.

SAP AI Core offers different options to integrate image processing into business processes with machine learning frameworks or via multi-modal foundation models.

Custom image AI solutions can be implemented with machine learning frameworks like TensorFlow2 or Detectron2 which offer algorithms to detect objects in images with localization and classification capabilities.

The SAP AI Core SDK Computer Vision content package extends Detectron2 with image classification and feature extraction. SAP AI Core Computer Vision implements template-driven training pipelines based on Metaflow. Computer vision metrics can be visualized in the SAP AI Launchpad, for example the Intersection over Union (IoU), which measures how well predicted regions overlap the labeled ground truth and thereby indicates the inference accuracy.
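The IoU metric can be illustrated with a minimal sketch for axis-aligned bounding boxes. The box format `(x_min, y_min, x_max, y_max)` and the function name are assumptions made for this example and are not taken from the SAP AI Core SDK.

```python
def intersection_over_union(box_a, box_b):
    """Compute IoU for two boxes given as (x_min, y_min, x_max, y_max)."""
    # Corners of the overlapping rectangle (if there is any overlap).
    inter_x_min = max(box_a[0], box_b[0])
    inter_y_min = max(box_a[1], box_b[1])
    inter_x_max = min(box_a[2], box_b[2])
    inter_y_max = min(box_a[3], box_b[3])

    # Width and height are clamped to zero for boxes that do not overlap.
    inter_area = max(0.0, inter_x_max - inter_x_min) * max(0.0, inter_y_max - inter_y_min)

    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union_area = area_a + area_b - inter_area

    return inter_area / union_area if union_area > 0 else 0.0


predicted = (1.0, 1.0, 3.0, 3.0)
ground_truth = (0.0, 0.0, 2.0, 2.0)
print(round(intersection_over_union(predicted, ground_truth), 4))  # 1 / 7, about 0.1429
```

An IoU of 1.0 means the predicted box matches the labeled box exactly, while 0.0 means the boxes do not overlap at all.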

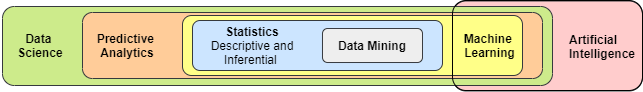

MLOps extends DevOps processes with machine learning steps to train ML models. The Machine Learning (ML) part of MLOps implements data science and software engineering techniques to optimize algorithms of functions which act as inference targets.

Machine Learning (ML) is a subset of Artificial Intelligence (AI) which learns knowledge from data to simulate human intelligence in Business AI processes. Machine learning functions can be combined as steps in SAP Business AI workflow pipelines to realize advanced AI scenarios.

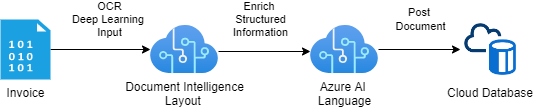

Intelligent Document Processing, visualized in the diagram above, is one popular example of an SAP Business AI pipeline. Pipelines can combine transformation tasks of text or image inputs with natural language processing (NLP) steps. SAP Business AI workflows can automate the processing of many document types in SAP, like purchase orders, sales orders or invoice documents.

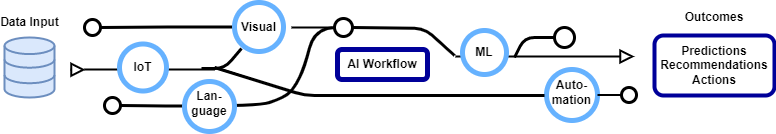

Data-to-value workflows can be empowered with SAP Business AI to improve data-driven decisions with insights based on predictions, recommendations or generated content.

Multi-modal data-to-value SAP Business AI workflows can also extend SAP cloud solutions like SAP S/4HANA Cloud with enterprise automation capabilities.

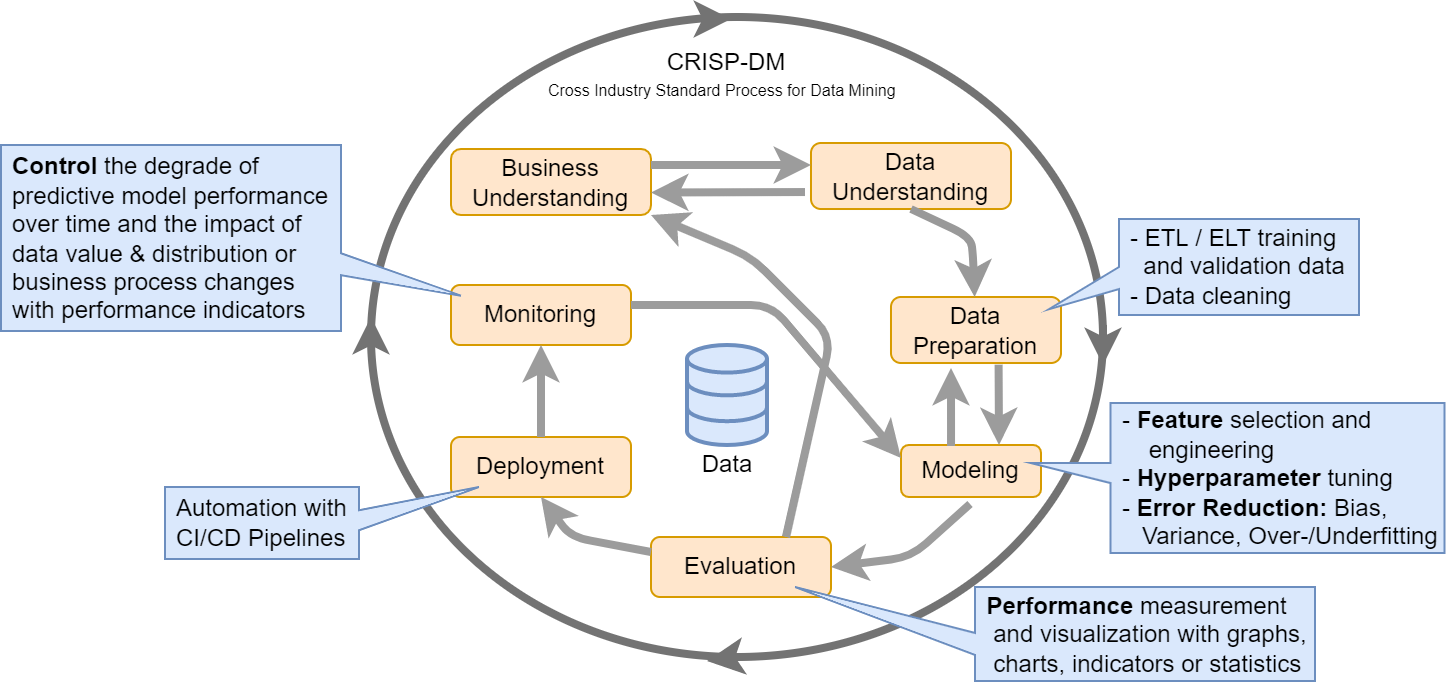

Machine learning integrations into SAP ML DevOps processes can be optimized with the standardized CRISP-DM (CRoss Industry Standard Process for Data Mining) process, which defines best practices for the model phases from business and data understanding through data modeling to the deployment of inference models.

Machine learning models learn functions to predict target values for input features with trained algorithms. These decision functions can be trained supervised, on data with labeled targets, or unsupervised, on data without known labels.

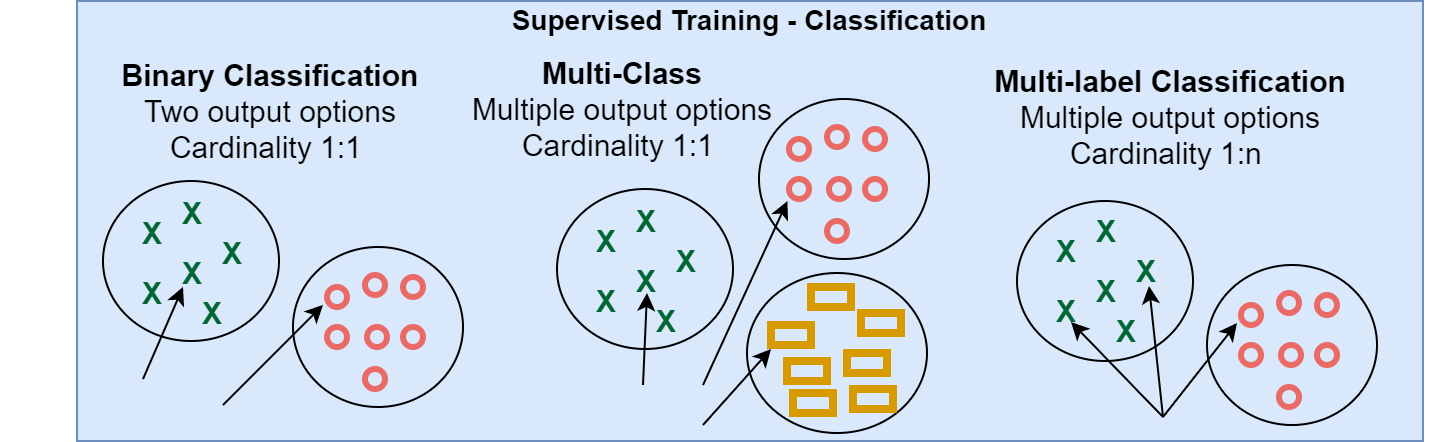

Classification and regression are supervised training methods with discrete classes or continuous numerical values as targets.

Binary and multi-class classification have two or more possible outputs and assign each input to exactly one target (1:1). In contrast, multi-label classification can assign more than one target to a single input, with cardinality 1:n.
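The difference between the two cardinalities can be sketched with plain Python. The class names, scores and the 0.5 threshold are hypothetical values chosen for illustration.

```python
CLASSES = ["invoice", "purchase_order", "sales_order"]


def one_hot(label):
    """Multi-class target: exactly one position is set to 1."""
    return [1 if name == label else 0 for name in CLASSES]


def multi_hot(labels):
    """Multi-label target: any number of positions can be set to 1."""
    return [1 if name in labels else 0 for name in CLASSES]


def predict_multi_class(scores):
    """Pick the single class with the highest score (1:1)."""
    best = max(range(len(scores)), key=lambda i: scores[i])
    return CLASSES[best]


def predict_multi_label(scores, threshold=0.5):
    """Pick every class whose score reaches the threshold (1:n)."""
    return [name for name, score in zip(CLASSES, scores) if score >= threshold]


scores = [0.7, 0.6, 0.1]
print(one_hot("invoice"))                        # [1, 0, 0]
print(multi_hot({"invoice", "purchase_order"}))  # [1, 1, 0]
print(predict_multi_class(scores))               # invoice
print(predict_multi_label(scores))               # ['invoice', 'purchase_order']
```

The same scores lead to one target in the multi-class case and to two targets in the multi-label case.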

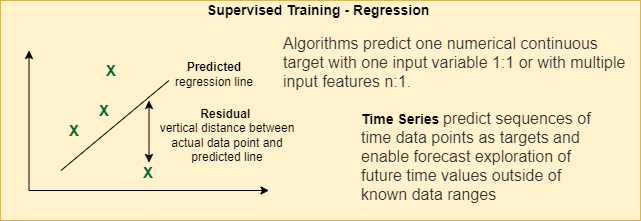

Time series regression methods predict sequences of time data points as targets and enable forecast exploration of future time values outside of known data ranges.
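Forecasting beyond the known data range can be illustrated with a deliberately simple stand-in: a least-squares linear trend that is extrapolated to future time steps. Real forecasting scenarios typically use richer models (for example with seasonality); the function names and sample values below are assumptions for this sketch.

```python
def fit_linear_trend(values):
    """Fit y = intercept + slope * t by least squares for t = 0, 1, 2, ..."""
    n = len(values)
    t_mean = (n - 1) / 2
    y_mean = sum(values) / n
    covariance = sum((t - t_mean) * (y - y_mean) for t, y in enumerate(values))
    variance = sum((t - t_mean) ** 2 for t in range(n))
    slope = covariance / variance
    intercept = y_mean - slope * t_mean
    return slope, intercept


def forecast(values, horizon):
    """Extrapolate the fitted trend to the next `horizon` time steps."""
    slope, intercept = fit_linear_trend(values)
    n = len(values)
    return [intercept + slope * (n + step) for step in range(horizon)]


history = [10.0, 12.0, 14.0, 16.0]
print(forecast(history, 3))  # [18.0, 20.0, 22.0]
```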

Active learning reduces the costs of supervised training by identifying the most important cases, which are then labeled manually or with algorithms.
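One common way to identify the most important cases is uncertainty sampling, sketched below for a binary classifier. This is only one of several possible selection strategies; the document identifiers and probabilities are hypothetical.

```python
def select_for_labeling(probabilities, budget):
    """Return the sample ids whose predicted probability is closest to 0.5.

    probabilities: dict mapping sample id -> predicted probability of the positive class.
    budget: number of samples that can be labeled.
    """
    # The closer a prediction is to 0.5, the less certain the model is about it.
    ranked = sorted(probabilities, key=lambda sample_id: abs(probabilities[sample_id] - 0.5))
    return ranked[:budget]


predictions = {"doc-1": 0.97, "doc-2": 0.52, "doc-3": 0.08, "doc-4": 0.41}
print(select_for_labeling(predictions, budget=2))  # ['doc-2', 'doc-4']
```

Only the selected samples are sent to labeling, so the labeling effort is spent where the model is least certain.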

Clustering is an unsupervised training method which groups similar objects together. Because of its low memory requirements, K-Means clustering can be used in Big Data scenarios with various data types.
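A minimal sketch of the K-Means iteration (assign each point to the nearest centroid, then move each centroid to the mean of its points) is shown below. The sample points and the initial centroids are made up for illustration, and the initial centroids are passed in explicitly to keep the example deterministic.

```python
def squared_distance(p, q):
    return sum((a - b) ** 2 for a, b in zip(p, q))


def mean_point(points):
    return tuple(sum(axis) / len(points) for axis in zip(*points))


def kmeans(points, centroids, max_iterations=20):
    """Group points around k centroids; returns (centroids, clusters)."""
    centroids = list(centroids)
    clusters = []
    for _ in range(max_iterations):
        # Assignment step: each point joins the cluster of its nearest centroid.
        clusters = [[] for _ in centroids]
        for p in points:
            nearest = min(range(len(centroids)),
                          key=lambda i: squared_distance(p, centroids[i]))
            clusters[nearest].append(p)
        # Update step: move each centroid to the mean of its cluster.
        updated = [mean_point(c) if c else centroids[i] for i, c in enumerate(clusters)]
        if updated == centroids:
            break  # assignments are stable, the algorithm has converged
        centroids = updated
    return centroids, clusters


points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.5), (8.0, 8.0), (9.0, 9.5), (8.5, 9.0)]
centroids, clusters = kmeans(points, centroids=[(1.0, 1.0), (8.0, 8.0)])
print(centroids)
print(clusters)
```

Apart from the data itself, the algorithm only keeps the k centroids in memory, which is one reason for its low memory footprint.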

Reinforcement training works without labeled targets: agents learn from rewards which they receive from their environment as positive feedback for correct actions.

Unsupervised training with Negative Sampling creates negative samples out of true positive instances.

Deep Learning is a subset of machine learning which imitates human brain processing in a simplified form with artificial neural networks (algorithms) to solve a variety of supervised and unsupervised machine learning tasks. Deep Learning automates feature engineering and processes non-linear, complex correlations.

Artificial Neural Networks (ANN) are composed of three types of layers (input, hidden and output layers) to process tabular data with activation functions and learned weights.

Recurrent Neural Networks (RNN) understand sequential information and are able to process input to output data stepwise.

Convolutional Neural Networks (CNN) are able to extract spatial features in image processing tasks like recognition or classification.

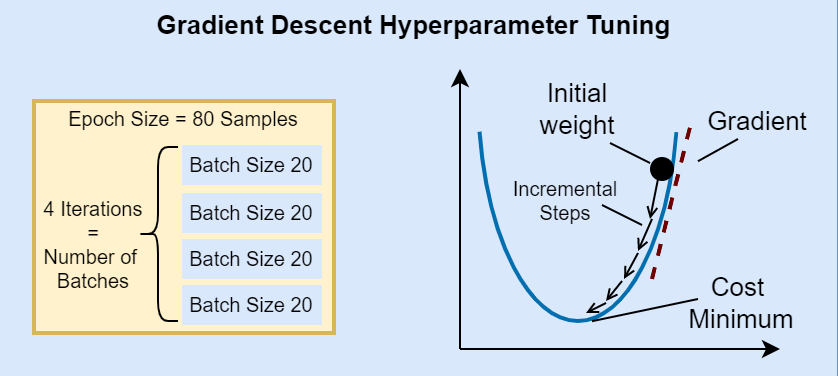

The accuracy and resource requirements of machine learning algorithms can be optimized with hyperparameter tuning in the modeling phase.

Gradient descent, as an example, is an iterative optimization algorithm which adjusts the internal model weights and is tuned with the epoch and batch size hyperparameters. The model optimization is measured with a cost function, which quantifies the difference between actual values and predicted values.

The batch size defines the number of samples (single rows of data) which are processed before the model weights are updated. An epoch is one complete pass, forward and backward, through the entire training dataset. Training with smaller batch sizes requires less memory and updates the weights more frequently, but with less accurate estimates of the gradient compared to full-batch training. The gradient descent variants are batch gradient descent, which uses all samples of the training set per update, mini-batch gradient descent, which uses a subset, and stochastic gradient descent, which uses one sample per step.
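The interplay of epochs and batch size can be sketched with a small linear model trained by gradient descent on a mean squared error cost function. The data, learning rate and function names are assumptions for this example; the same function covers batch, mini-batch and stochastic gradient descent depending on `batch_size`.

```python
import random


def mean_squared_error(xs, ys, w, b):
    """Cost function: average squared difference between predictions and targets."""
    return sum((w * x + b - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def train_linear_model(xs, ys, epochs, batch_size, learning_rate=0.05, seed=0):
    """Fit y = w * x + b; returns (w, b, number_of_weight_updates)."""
    rng = random.Random(seed)
    w, b = 0.0, 0.0
    indices = list(range(len(xs)))
    updates = 0
    for _ in range(epochs):  # one epoch = one full pass over the dataset
        rng.shuffle(indices)
        for start in range(0, len(indices), batch_size):
            batch = indices[start:start + batch_size]
            # Gradient of the MSE cost, estimated on the current batch only.
            grad_w = sum(2 * (w * xs[i] + b - ys[i]) * xs[i] for i in batch) / len(batch)
            grad_b = sum(2 * (w * xs[i] + b - ys[i]) for i in batch) / len(batch)
            w -= learning_rate * grad_w
            b -= learning_rate * grad_b
            updates += 1
    return w, b, updates


xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]  # generated from y = 2x + 1

for name, batch_size in [("batch", 4), ("mini-batch", 2), ("stochastic", 1)]:
    w, b, updates = train_linear_model(xs, ys, epochs=500, batch_size=batch_size)
    print(name, updates, round(mean_squared_error(xs, ys, w, b), 6))
```

With the same number of epochs, a smaller batch size leads to more weight updates: 500 for the full batch, 1000 for batches of two and 2000 for single samples in this setup.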