Cloud architecture best practices help to implement hybrid multi-cloud enterprise solutions with cloud services on business technology platforms like SAP BTP, Amazon AWS or Microsoft Azure.

Innovative Multi-Cloud Architectures with Services on SAP & Azure & AWS

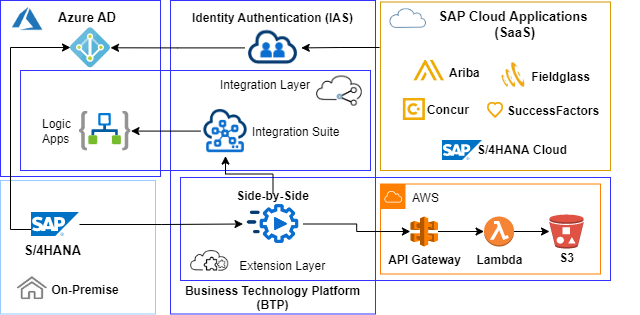

SAP cloud reference architectures are built on the SAP Business Technology Platform (BTP) foundation with the pillars Cloud Computing and Extensibility, Integration, Network and Security, and Data-to-Value.

These pillars can be extended with services of other cloud platforms to realize multi-cloud layers for extension, integration, machine-learning or data-driven scenarios.

Multi-cloud architecture best practices for resilient workloads describe design patterns to implement cloud qualities like scalability, elasticity and high availability on application, integration, network, storage or compute layers. Best practices for cloud implementations are similar on SAP and hyperscaler cloud platforms but differ in the availability of services and control options.

Loosely coupled containers or virtual machines are options to implement these cloud qualities on the cloud compute layer. Serverless application design patterns reduce the complexity and operational effort of workload management for applications running in managed, stateless compute containers with integrated monitoring and logging.

The implementation of network and security best practices protects SAP enterprise cloud workloads on integrated multi-cloud platforms.

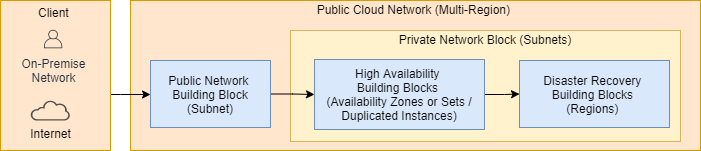

Architectural Building Blocks (ABB) on cloud platforms are mainly separated on virtual network level. The simplified solution context diagram visualizes the main building blocks of SAP deployments on hyperscaler platforms (Amazon AWS, Microsoft Azure, Google Cloud Platform) to realize secure, highly available and fault-tolerant workloads.

These architectural building blocks can be designed on virtual network or subnet level with hyperscaler compute, storage and network resources.

SAP cloud deployments have to be accessed from on-premise and over the public internet. Single points of entry minimize attack options and avoid many-to-many connections between on-premise and cloud building blocks.

Dedicated connections between on-premise and cloud environments offer access without public internet traffic. TLS or IPsec VPN connections can be used as an alternative or in addition to dedicated connections to encrypt cloud traffic on the transport (TLS) or network (IPsec) layer.

Hub-spoke topologies implement single public entry points to private network areas. Public (hub) building blocks, accessible from on-premise or over the public internet, are peered to multiple privately accessible cloud virtual networks (spokes).

Different virtual network components can be connected with network peering concepts on hyperscaler platforms like Microsoft Azure or Amazon AWS.

Virtual hub network areas are shared public entry points to the cloud environment, connected by routing configuration to private spoke subnets with resources and services that are accessible only internally. Central entry points make it possible to enforce corporate security guidelines with rules or policies and to control or inspect ingress and egress traffic with network virtual appliances (NVA, firewall) or gateways (NAT, internet, VPN).
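
As a minimal sketch of the hub-spoke idea, the following Python snippet models peerings as pairs of network names and checks that every peering involves the hub, so traffic between spokes always passes the central entry point. The network names and the helpers `is_hub_spoke` and `path_between` are hypothetical and not part of any cloud SDK.

```python
from typing import List, Tuple

Peering = Tuple[str, str]


def is_hub_spoke(peerings: List[Peering], hub: str) -> bool:
    """Return True if every peering has the hub as one endpoint."""
    return all(hub in pair for pair in peerings)


def path_between(source: str, target: str, hub: str) -> List[str]:
    """Return the network path in a strict hub-spoke layout."""
    if source == target:
        return [source]
    if hub in (source, target):
        return [source, target]
    # Spokes are not peered with each other, so traffic is routed via the hub.
    return [source, hub, target]


# Hypothetical example topology.
peerings = [("hub", "spoke-sap-prod"), ("hub", "spoke-sap-dev")]
print(is_hub_spoke(peerings, "hub"))
print(path_between("spoke-sap-dev", "spoke-sap-prod", "hub"))
```

A direct spoke-to-spoke peering would make `is_hub_spoke` return `False`, which is the situation a central inspection point is meant to rule out.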

Hub network components can host shared services such as backup, domain controllers, flow control, installation or administration services (e.g. DNS, NTP, IdP, AD DS, PKI for SSO) to reduce subscriptions for these management resources and to separate concerns between management and workload resources. SAP support access has to be enabled via a public or elastic (AWS) SAProuter IP address in the hub.

Bastion hosts or jump boxes (servers, hosts) separate hub networks with TLS-secured RDP or SSH access options and allow administrators to jump to internal resources with limited access. In contrast to bastion hosts, jump boxes are not accessible from the public internet.

Cloud compute shall automatically handle changing demand with scalable and elastic workloads. Available scale-up and scale-out options have to be evaluated for each cloud infrastructure.

Elastic computing environments handle demand changes e.g. for SAP primary (PAS) or additional application servers (AAS) automatically. Autoscaling can be implemented based on performance metrics captured with Azure Enhanced Monitoring Extensions or AWS Data Providers for SAP. In addition, SAP provides the snapshot monitoring (SMON) utility with SAP specific performance metrics in a table to be accessed via OData or RFC.
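
A threshold-based scaling decision for application servers could look like the following sketch. The thresholds, the instance limits and the function name `desired_instances` are assumptions for illustration; real autoscaling rules are configured in the hyperscaler services and typically add cooldown periods.

```python
def desired_instances(
    current: int,
    cpu_percent: float,
    scale_out_at: float = 75.0,  # assumed upper CPU threshold
    scale_in_at: float = 30.0,   # assumed lower CPU threshold
    minimum: int = 1,
    maximum: int = 8,
) -> int:
    """Return the target number of application servers for one metric sample."""
    if cpu_percent > scale_out_at:
        target = current + 1  # scale out by one additional application server
    elif cpu_percent < scale_in_at:
        target = current - 1  # scale in by one application server
    else:
        target = current
    # Never leave the configured instance range.
    return max(minimum, min(maximum, target))


print(desired_instances(current=2, cpu_percent=90.0))
print(desired_instances(current=2, cpu_percent=10.0))
```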

Highly available workloads have to be deployed redundantly across areas like availability zones or sets, interconnected with low latency and high bandwidth. Load balancers distribute traffic with rules between these availability zones (AWS, Azure) or sets (Azure).

High availability SLAs offered by Azure are 99.9% for a single VM, 99.95% for an Availability Set and 99.99% for Availability Zones (AZ).
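
To make these percentages tangible, the sketch below converts an SLA into the downtime it still permits per year and multiplies the SLAs of components that all have to be up at the same time. The function names are illustrative, and a year is simplified to 365 days.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, leap years ignored


def max_downtime_hours(sla_percent: float, period_hours: float = HOURS_PER_YEAR) -> float:
    """Return the downtime an SLA still permits within the period."""
    return period_hours * (1 - sla_percent / 100)


def composite_sla(*sla_percents: float) -> float:
    """Return the combined SLA (in percent) of components that must all be up."""
    combined = 1.0
    for percent in sla_percents:
        combined *= percent / 100
    return combined * 100


for name, sla in [("single VM", 99.9), ("Availability Set", 99.95), ("Availability Zones", 99.99)]:
    print(f"{name}: {max_downtime_hours(sla):.2f} h/year")

# Example: application server and database, each on a single VM.
print(f"{composite_sla(99.9, 99.9):.4f} %")
```

The composite value shows why a chain of single-VM components ends up below the SLA of each individual component.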

HANA System Replication (HSR) enables high availability for SAP HANA instances with redundancy. Synchronous replication modes depend on network latency for redo log shipping. SAP offers NIPING to identify the transfer performance between availability zones.

High availability of HANA Large Instances can be improved by extensions provided e.g. by SUSE or Red Hat.

Hybrid multi-cloud disaster recovery strategies are based on custom recovery plans implemented with different strategies for cloud and on-premise workloads. Metrics like mean time to repair (MTTR) and mean time between failures (MTBF) are performance and resilience indicators of cloud systems.
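
Both metrics can be combined into a steady-state availability figure with the common formula MTBF / (MTBF + MTTR). The sketch below applies it to hypothetical values that are not taken from any measurement.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Return steady-state availability as a fraction between 0 and 1."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Hypothetical system: one failure every 999 hours, one hour to repair.
print(f"{availability(mtbf_hours=999, mttr_hours=1):.3%}")
```

Halving the repair time improves availability as much as doubling the time between failures, which is why automated recovery is often the cheaper lever.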

Recovery planning has to consider service level agreements (SLA) like the acceptable maximum service interruption (Recovery Time Objective - RTO) and loss of data (Recovery Point Objective - RPO). Lower RTO and RPO targets cost more in terms of resource spend and operational complexity.
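
A simple way to compare candidate strategies against these objectives is sketched below. The class names, strategy names and all numbers are hypothetical and only illustrate the check.

```python
from dataclasses import dataclass


@dataclass
class Objectives:
    rto_minutes: float  # acceptable maximum service interruption
    rpo_minutes: float  # acceptable maximum window of lost data


@dataclass
class Strategy:
    name: str
    recovery_minutes: float   # estimated time until the service runs again
    data_loss_minutes: float  # worst-case age of the last recoverable state


def meets_objectives(strategy: Strategy, objectives: Objectives) -> bool:
    """Return True if the strategy stays within both RTO and RPO."""
    return (
        strategy.recovery_minutes <= objectives.rto_minutes
        and strategy.data_loss_minutes <= objectives.rpo_minutes
    )


# Hypothetical numbers for illustration only.
objectives = Objectives(rto_minutes=60, rpo_minutes=15)
strategies = [
    Strategy("backup and restore", recovery_minutes=240, data_loss_minutes=60),
    Strategy("active/passive standby", recovery_minutes=30, data_loss_minutes=5),
]
for strategy in strategies:
    print(strategy.name, meets_objectives(strategy, objectives))
```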

Some recovery strategies implement active/active or active/passive setups, with defined recovery time and point objectives. Passive or standby workloads get activated in case of disaster events.

Recovery strategies can be categorized into:

Virtual IP addresses do not correspond to single hardware devices and enable failover scenarios. Examples are AWS Overlay IPs, defined on VPC level to be used across subnets, and Floating IPs of Azure load balancer rules, which reassign IPs from failed nodes to other (passive or warm) nodes.
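
The reassignment logic behind a virtual IP can be sketched independently of any platform. The function `select_vip_owner` and the node names are assumptions; in practice cluster software or a load balancer health probe performs this step.

```python
from typing import Dict


def select_vip_owner(current_owner: str, healthy: Dict[str, bool]) -> str:
    """Return the node that should hold the virtual IP after a health check."""
    if healthy.get(current_owner, False):
        return current_owner  # no failover needed
    for node, is_healthy in healthy.items():
        if is_healthy:
            return node  # first healthy standby node takes over the IP
    raise RuntimeError("no healthy node available for the virtual IP")


# Hypothetical two-node setup with a failed primary node.
print(select_vip_owner("hana-node-1", {"hana-node-1": False, "hana-node-2": True}))
```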

Hyperscaler services like AWS Elastic Disaster Recovery and Azure Site Recovery are solutions to implement DR strategies.

SAP HANA database recovery offers synchronous and asynchronous system replication between isolated, geographically independent network areas. Synchronous replication is only possible between availability zones with low latency.
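
The dependency on latency can be expressed as a small decision helper that works on measured round-trip times, for example collected with NIPING. The limit of 1 ms is a placeholder assumption and not an SAP recommendation; the function name is hypothetical.

```python
from typing import Sequence


def replication_mode(round_trip_ms: Sequence[float], sync_limit_ms: float = 1.0) -> str:
    """Suggest a replication mode based on the worst measured round-trip time."""
    if not round_trip_ms:
        raise ValueError("at least one latency measurement is required")
    worst_case = max(round_trip_ms)
    # sync_limit_ms is an assumed placeholder threshold, not a vendor value.
    return "synchronous" if worst_case <= sync_limit_ms else "asynchronous"


print(replication_mode([0.4, 0.6, 0.5]))    # e.g. between availability zones
print(replication_mode([18.0, 21.5, 19.2]))  # e.g. between distant regions
```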

Hyperscaler backup services implement backup solutions for SAP HANA databases, for example the AWS Backint Agent certified for HANA Backint, Azure Backup on VMs certified for HANA Backint, certified third-party HANA Backint solutions or storage snapshots on HANA Large Instances.

Connections between on-premise data centers and cloud environments have to implement security standards and meet high performance requirements. Connection targets are virtual interfaces or public service endpoints on hybrid multi-cloud platforms like SAP BTP, AWS or Azure.

Hyperscalers offer connection options like dedicated connections, VPN (Point-to-Site, Site-to-Site) or virtual WAN. Site-to-Site (S2S) VPN connections are suitable for PoC, development or sandbox environments and as a backup or disaster recovery option.

Dedicated connections are private connections to hyperscaler cloud environments with guaranteed bandwidth, enhanced security and better performance than public internet connections. Dedicated connections are offered by Azure and AWS for single customers (ExpressRoute Direct, AWS Direct Connect) or by partners (ExpressRoute, hosted AWS Direct Connect connections). Azure ExpressRoute and AWS Direct Connect use single-mode fiber cables, which support long distances with fast transmission.

For ExpressRoute virtual network gateways, Azure automatically creates gateway VMs with routing tables. Route filters limit the consumable services of Azure or Microsoft Office domains.

Dedicated solutions implement the Border Gateway Protocol (BGP) to route traffic efficiently across autonomous systems (AS) which are groups of routers uniquely identified by numbers (ASN).

Peers, communities and tags are some BGP elements. Peers represent two routers establishing TCP connections and exchanging routing information via sessions. Communities group IP prefixes with tags, assigned to virtual networks in each region, to identify and filter cloud traffic origins by on-premise route filters.
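
Filtering by community tags can be illustrated without a router. In the sketch below, routes carry community values and an on-premise filter accepts only the tagged origins it trusts. The `Route` class, the prefixes and the community values are hypothetical; 65000 lies in the private-use ASN range.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class Route:
    prefix: str
    communities: FrozenSet[str]


def filter_by_community(routes: List[Route], allowed: FrozenSet[str]) -> List[Route]:
    """Keep only routes tagged with at least one allowed community."""
    return [route for route in routes if route.communities & allowed]


# Hypothetical advertised routes, one community value per cloud region.
advertised = [
    Route("10.10.0.0/16", frozenset({"65000:100"})),  # e.g. region A
    Route("10.20.0.0/16", frozenset({"65000:200"})),  # e.g. region B
]
accepted = filter_by_community(advertised, frozenset({"65000:100"}))
print([route.prefix for route in accepted])
```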

Encryption can be implemented on different OSI layers, for example with MACsec on the data link layer (layer 2), with IPsec on the network layer (layer 3, typically used for VPN connections) or with a combination of both.

MACsec encryption offers better scalability and performance for Ethernet (LAN) and point-to-point connectivity than IPsec to protect network-to-network or device-to-network connections. MACsec encryption is available with ExpressRoute Direct or AWS Direct Connect.

Hyperscalers offer options like Private Links to control traffic to resources of service providers with private connections from virtual networks (on-premise or peered).

AWS PrivateLink endpoints are service endpoints created with Elastic Network Interfaces (ENI) in service consumer VPCs for private connections to resources.

Azure Service Endpoints restrict traffic from private subnet endpoints of Azure VNets to resources outside the VNet. Private Links assign private virtual network IPs to PaaS resources.

Virtual appliances are often used to inspect and audit outbound network traffic.

Deployments of SAP workloads on hyperscaler environments have to consider performance requirements and costs. Workload segregation on subnet level avoids higher costs for traffic between virtual networks. The network design shall consider that bandwidth is metered on egress (outbound) traffic for the needed network connections.
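
A rough estimate of metered egress traffic can support such design decisions. The price per GB and the free allowance below are placeholder assumptions, not actual hyperscaler prices, and the function name is hypothetical.

```python
def monthly_egress_cost(egress_gb: float, price_per_gb: float, free_gb: float = 0.0) -> float:
    """Return the cost of outbound traffic that exceeds the free allowance."""
    billable_gb = max(0.0, egress_gb - free_gb)
    return billable_gb * price_per_gb


# Placeholder values: 1000 GB outbound, 100 GB free, 0.05 currency units per GB.
print(f"{monthly_egress_cost(egress_gb=1000, price_per_gb=0.05, free_gb=100):.2f}")
```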

Accelerated (Azure) or enhanced (AWS) networking uses single root I/O virtualization (SR-IOV) on VM NICs to offer a high-performance path that bypasses the host server and reduces latency, jitter and CPU utilization. This reduces, for example, the latency between SAP application servers and database servers, which can be analyzed with the ABAPMeter tool.

Some cost saving options are usage commitments with AWS Savings Plans or Azure Reserved Instances, start-stop automation of HANA-based SAP systems with AWS Systems Manager automation or Azure Automation runbooks, and stopping (deallocating) VMs that are not needed.

SAP HANA databases can be deployed with conversion and lift-and-shift migration to hyperscaler platforms. Conversions from any DB can be performed as classical S/4HANA migration with system update/upgrade followed by DB migration to HANA. The SUM Database Migration Option (DMO) supports two migration scenarios: system move (changing the application server) with transfer of export files to the hyperscaler, and pipe-based DMO to Azure or another hyperscaler over WAN MPLS (Multiprotocol Label Switching) connections.

Supported HANA Lift and Shift scenarios are HANA System Replication (HSR), homogeneous system copy, HANA backup and restore and Near Zero Downtime (NZDT) with clone system.

Migrations of SAP HANA systems have to be planned with the sizing report /SDF/HDB_SIZING for existing SAP environments or with the SAP Quick Sizer tool for greenfield installations.

SAP HANA tailored data center integration (TDI) enables HANA installations with cloud compute, storage and network components. Cloud storage attached to compute instances has to provide sufficient IOPS and high throughput, achieved e.g. with RAID-0 striped volumes, where redundancy is implemented on storage level and not on RAID level.
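
The effect of striping can be estimated with simple arithmetic: a RAID-0 volume aggregates the IOPS and throughput of its disks, but the result is capped by the limits of the compute instance. All numbers and the function name in this sketch are hypothetical.

```python
from typing import Tuple


def striped_volume_limits(
    disk_count: int,
    iops_per_disk: int,
    mbps_per_disk: int,
    vm_iops_limit: int,
    vm_mbps_limit: int,
) -> Tuple[int, int]:
    """Return the effective (IOPS, MB/s) of a RAID-0 stripe set on one VM."""
    iops = min(disk_count * iops_per_disk, vm_iops_limit)
    mbps = min(disk_count * mbps_per_disk, vm_mbps_limit)
    return iops, mbps


# Hypothetical: four disks with 5000 IOPS and 200 MB/s each,
# attached to a VM capped at 16000 IOPS and 1000 MB/s.
print(striped_volume_limits(4, 5000, 200, 16000, 1000))
```

In this example the disks could deliver 20000 IOPS together, so the VM limit and not the storage becomes the bottleneck.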

Scaling up HANA databases offers some performance advantages and easier management than multiple cluster nodes in scale-out scenarios. Scaling out HTAP (Hybrid Transactional Analytical Processing) databases with OLTP and OLAP workloads has to be planned, e.g. how nodes are distributed over availability zones.

Data and log storage areas have to be separated completely for each HANA node (master + worker). Shared binary, trace and configuration or backup files can be mounted via NFS to each HANA cluster node.

SAP HANA Dynamic Tiering is available on dedicated hosts to extend memory with disk-based columnar store. Dynamic Tiering manages less frequently accessed (warm) data for native SAP HANA and is not available for S/4HANA and BW/4HANA, which use Data Aging strategies instead.

Large HANA instances can be deployed on non-elastic bare metal servers to overcome virtual machine limits. These hardware resources are dedicated to customers with large databases (> 4 TB).

The following table lists some Solution Building Blocks (SBB) as part of reference cloud architectures for SAP workloads on hyperscaler platforms like Microsoft Azure, Amazon AWS and Google Cloud Platform (GCP):

| SBB | S/4HANA on Azure | S/4HANA on AWS | S/4HANA on GCP |
|---|---|---|---|
| Public Hub | | | |
| Private Connectivity | Azure ExpressRoute | Direct Connect with VPN Gateway | Cloud Interconnect with VPN Gateway |
| Gateways | Application / VPN | Internet / VPN | Internet / VPN |
| Hub Network | Azure VNet | public subnet | public subnet |
| Hub Components | VPN Gateway, Azure Firewall, Azure Bastion | AWS Transit Gateway, Bastion Host | GCP Network Peering, VM with Identity-Aware Proxy as Bastion host |
| Private Spokes | | | |
| Compute Engine | Azure VM | Amazon EC2 | Google GCE |
| Database | | RDS recovery combines daily backups and transaction logs stored every 5 min | |
| Storage | Azure Managed Disks | AWS S3 and AWS Glacier (Standard RTO 3-5 h) | Google Cloud Storage |
| Deployment automation | ARM templates | AWS Launch Wizard guided deployment | Cloud Deployment Manager |

These building blocks get deployed with tools or scripts to ensure reproducibility and to facilitate governance.